Running open-notebook on M1 Max Without Docker or Cloud APIs, and Letting qwen3.6:35b Read Its Own Article

Contents

Contents

open-notebook is an open-source implementation of Google’s NotebookLM. It ingests PDFs and web articles, then lets you chat, summarize, and even generate podcasts from them. The tagline is “A private, multi-model, 100% local, full-featured alternative to Notebook LM”.

But the standard install instructions in the README assume Docker Compose, and the first thing they push you toward is registering OpenAI or Anthropic API keys. Calling that “100% local” is a stretch — as shipped, you get shackled to Docker, and the moment you use a provider API your data leaves the machine.

So on my M1 Max 64GB, I ran it with these constraints:

- No Docker (SurrealDB runs as a native binary)

- No cloud API key registered at all (Ollama only)

On top of that, I fed it the Qwen3.6-27B Dense vs 35B-A3B MoE comparison article I had written this morning, and asked the chat LLM itself (qwen3.6:35b) to tell me about its own benchmark numbers.

Environment

| Item | Value |

|---|---|

| Machine | M1 Max 64GB (unified memory) |

| OS | macOS Darwin 25.3.0 |

| open-notebook | v1.8.5 |

| SurrealDB | 2.6.5 (native) |

| Ollama | 0.20.6 |

| Chat Model | qwen3.6:35b (23GB, 4bit GGUF, MoE) |

| Embedding Model | bge-m3:latest (1.2GB, multilingual) |

| Frontend | Next.js 16.2.3 (:3000) |

| Backend API | FastAPI (:5055) |

| Worker | surreal-commands-worker |

Four processes need to stay up, so I just used a tmux session with four panes.

Architecture

open-notebook has a Python backend, a Next.js frontend, a SurrealDB database, and a worker process that handles async tasks separately. docker-compose.yml normally takes care of all of that, but if you run it yourself, this block diagram is worth keeping in mind.

flowchart LR

Browser[ブラウザ] -->|:3000| Frontend[Next.js frontend]

Frontend -->|/api/* proxy| Backend[FastAPI backend :5055]

Backend -->|ws :8000/rpc| DB[(SurrealDB v2)]

Backend -->|command enqueue| DB

Worker[surreal-commands-worker] -->|poll| DB

Worker -->|chat/embed| Ollama[Ollama :11434]

Backend -->|chat/embed| OllamaThe worker exists because source ingestion — “fetch body → chunk → generate embeddings → generate summary” — takes a long time. The API doesn’t wait synchronously; it just returns a command ID.

Standing it up without Docker

open-notebook’s only real Docker dependency is SurrealDB. SurrealDB itself is a single Rust binary, so it runs natively.

1. Install SurrealDB v2

Homebrew’s surrealdb/tap/surreal gives you the latest v3 series. open-notebook assumes v2, so pin the version through the official installer.

curl -sSf https://install.surrealdb.com | sh -s -- --version v2.6.5It lands at ~/.surrealdb/surreal. Add it to PATH or just invoke it directly.

2. open-notebook itself

cd ~/projects

git clone https://github.com/lfnovo/open-notebook.git

cd open-notebook

uv sync

uv pip install python-magic

brew install libmagic # backend for python-magicpython-magic is a file type detection library whose backend needs the libmagic shared library. Without brew installing it, you’ll hit failed to find libmagic at import time.

3. .env setup

Just replace the encryption key with something strong. API keys get registered via the UI later, so don’t put them in .env.

cp .env.example .env

# Set OPEN_NOTEBOOK_ENCRYPTION_KEY to a strong value

sed -i '' "s/^OPEN_NOTEBOOK_ENCRYPTION_KEY=.*/OPEN_NOTEBOOK_ENCRYPTION_KEY=$(openssl rand -hex 32)/" .env

# Change SURREAL_URL from docker DNS name to localhost

sed -i '' 's|^SURREAL_URL=.*|SURREAL_URL=ws://localhost:8000/rpc|' .envOPEN_NOTEBOOK_ENCRYPTION_KEY is the key used to encrypt the API credentials stored in the DB with Fernet (AES-128-CBC + HMAC-SHA256). Weaken it and you risk plaintext exposure, so make it a 32-byte random hex.

4. Frontend dependencies

cd frontend && npm install5. Launch the four processes in tmux

tmux new-session -d -s opennb -c ~/projects/open-notebook

# Pane 0: SurrealDB

tmux send-keys -t opennb:0 'export PATH=$HOME/.surrealdb:$PATH && \

surreal start --log info --user root --pass root \

rocksdb:./surreal_data/db.rocksdb' Enter

# Pane 1: API

tmux split-window -t opennb:0 -v

tmux send-keys -t opennb:0.1 'uv run --env-file .env run_api.py' Enter

# Pane 2: Frontend

tmux split-window -t opennb:0.1 -h -c ~/projects/open-notebook/frontend

tmux send-keys -t opennb:0.2 'npm run dev' Enter

# Pane 3: Worker

tmux split-window -t opennb:0 -h

tmux send-keys -t opennb:0.3 'uv run --env-file .env surreal-commands-worker \

--import-modules commands' EnterPort layout.

| Process | Port |

|---|---|

| SurrealDB | 8000 |

| FastAPI | 5055 |

| Next.js | 3000 |

| Worker | (just waits on DB LIVE queries, no port) |

Open http://localhost:3000 in the browser and you’re running. The frontend proxies /api/* to :5055, so day to day you only ever touch :3000.

Safety sanity check

Before handing it any API keys, I skimmed the repo for anything suspicious.

| Aspect | Finding |

|---|---|

| Outbound calls | No traffic outside LLM providers. I grepped all requests.post, httpx.post, and fetch( — no telemetry to the author’s servers |

| API key handling | Encrypted with Fernet and stored in the DB. No log statements that leak the key |

| Arbitrary code execution | No eval, exec, subprocess, or os.system usage |

| Frontend | dangerouslySetInnerHTML only for a static theme script. Low XSS risk |

| Dependencies | Five packages authored by the project owner (esperanto, content-core, ai-prompter, podcast-creator, surreal-commands) are on official PyPI and traceable on GitHub. Normal OSS-level risk |

For purely local use, it looked fine. If you want to expose it publicly, you’ll also need to set OPEN_NOTEBOOK_PASSWORD.

Hooking up Ollama

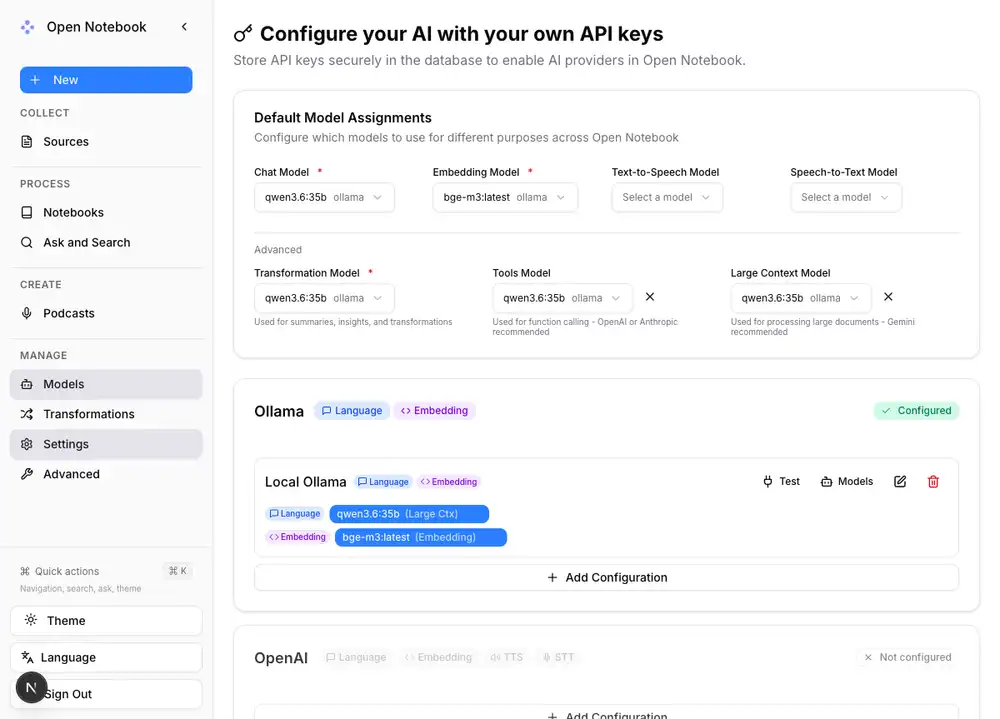

The first screen is /notebooks. Jump to API key management via Manage → Models in the left sidebar.

Among the 18 providers, under the Ollama section: Add Configuration → a throwaway name (Local Ollama), leave API Key blank, and keep Base URL at the default (http://localhost:11434).

Next, hit Models to open the model discovery UI. It lists every model visible to ollama list as checkboxes, so:

- Model Type:

Language→ checkqwen3.6:35b→ Add - Model Type:

Embedding→ checkbge-m3:latest→ Add

My existing Ollama didn’t have an embedding model, so I pulled bge-m3. It’s a BGE-family multilingual embedding model with Japanese support, 1024-dim output. open-notebook registers Language and Embedding models separately, so vision/text LLMs alone won’t give you a working RAG pipeline.

Finally, at the top of the page under Default Model Assignments, hit Auto-assign Defaults. The registered models get wired into chat, embedding, transformation, and tools slots automatically.

TTS/STT stay blank because Ollama doesn’t support them. The podcast feature requires TTS, so I gave up on it.

Feeding an article into a notebook

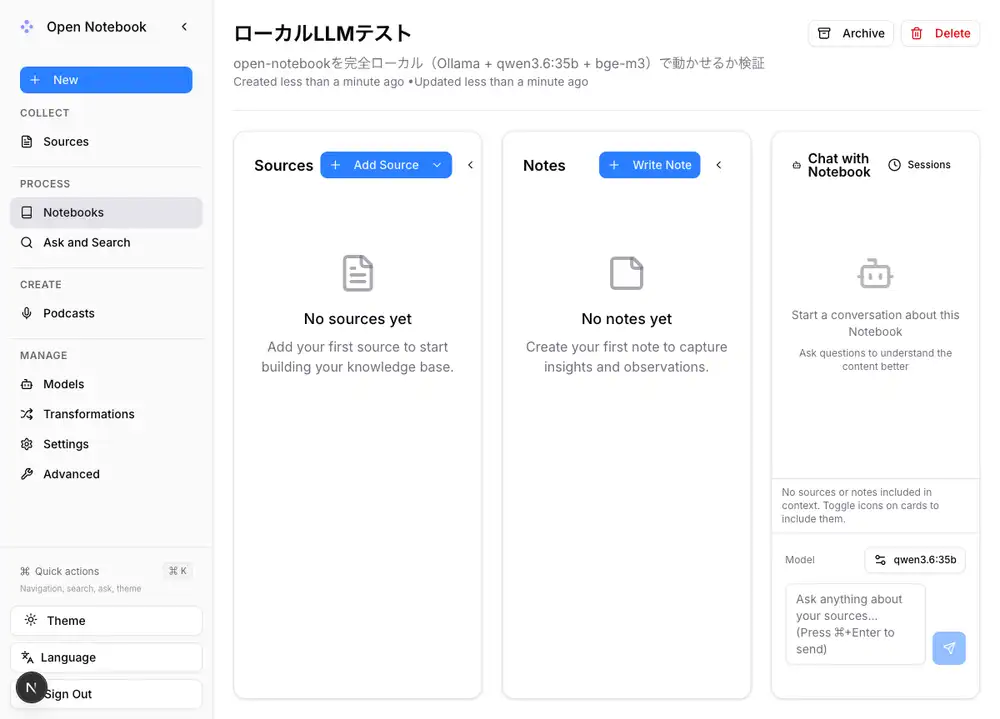

Notebooks → New Notebook gives you a blank notebook in a three-pane layout. Sources on the left, Notes in the middle, Chat with Notebook on the right. The chat panel shows the active model name at the bottom right — and yes, it’s qwen3.6:35b.

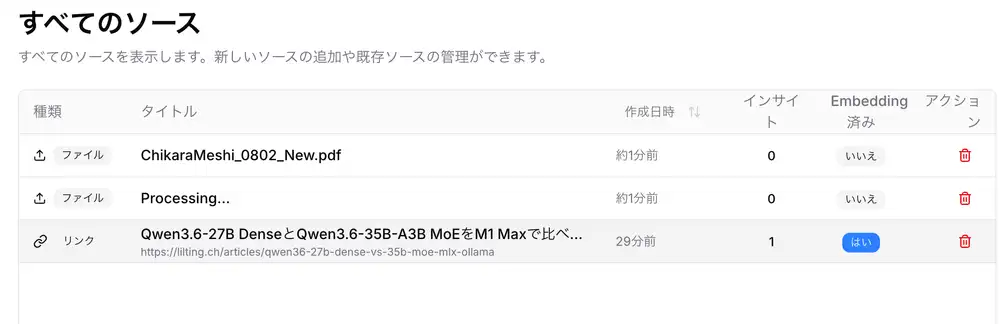

In Add Source → Add URL, I pasted the URL of my own Qwen3.6 comparison article.

https://lilting.ch/articles/qwen36-27b-dense-vs-35b-moe-mlx-ollamaTailing the worker log shows the processing flow.

Processing source with 1 notebooks

Submitting embed_source job for source source:wi9kimb151g4g0h1y0o0

Created 20 chunks for source (sizes: min=47, max=644, avg=404 chars)

Successfully embedded source: 20 chunks in 2.59s

Successfully created insight source_insight:zo13w52zo7ly3gfpg6rn in 0.07s

Successfully embedded insight in 0.41s20 chunks embedded via bge-m3 in 2.59 seconds. Then qwen3.6:35b generates the insight (summary). That step takes the longest.

ollama ps shows both models sitting in VRAM.

NAME SIZE PROCESSOR CONTEXT UNTIL

qwen3.6:35b 30 GB 100% GPU 128000 4 minutes from now

bge-m3:latest 1.3 GB 100% GPU 8192 3 minutes from nowAbout 31GB combined. Still plenty of headroom on an M1 Max 64GB. UNTIL is the keep-alive — both models stay resident on the GPU, ready for the next request.

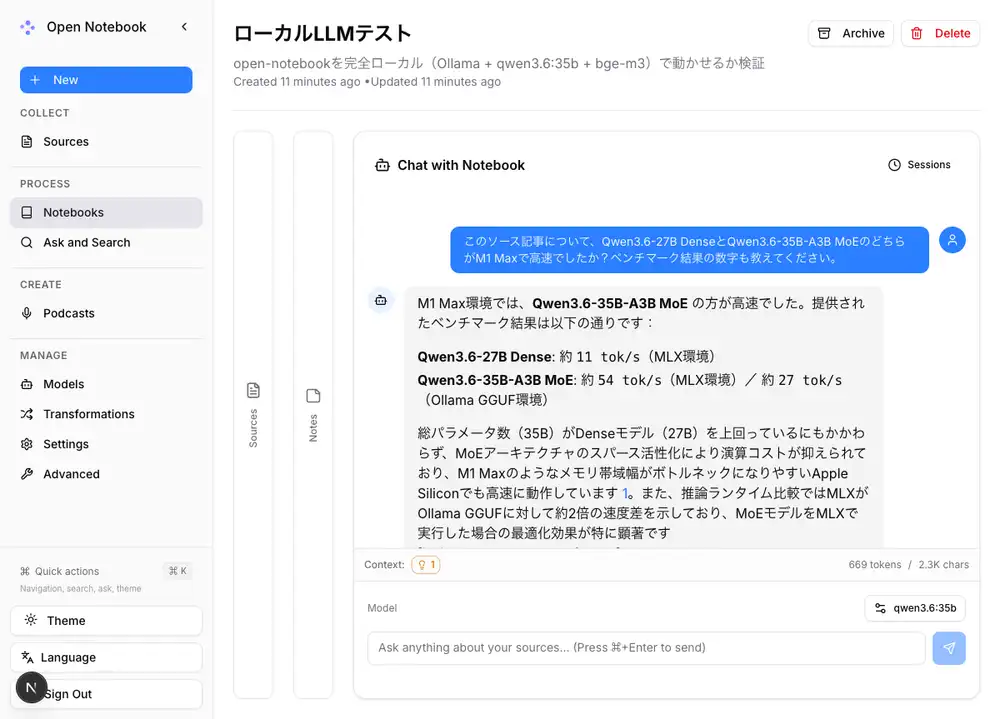

Asking qwen3.6:35b about its own benchmark

I typed a question into the chat pane.

Regarding the source article: which was faster on M1 Max, Qwen3.6-27B Dense or Qwen3.6-35B-A3B MoE? Please give me the benchmark numbers too.

The response that came back:

On M1 Max, Qwen3.6-35B-A3B MoE was faster. Here are the benchmark results provided:

- Qwen3.6-27B Dense: about

11 tok/s(MLX)- Qwen3.6-35B-A3B MoE: about

54 tok/s(MLX) / about27 tok/s(Ollama GGUF)Even though the total parameter count (35B) is higher than the Dense model (27B), MoE’s sparse activation keeps per-token compute low, so it runs faster even on Apple Silicon where memory bandwidth tends to be the bottleneck[1]. The inference runtime comparison also shows MLX roughly 2x faster than Ollama GGUF, with the optimization benefit especially pronounced when running MoE models on MLX.

References: [1] - source:wi9kimb151g4g0h1y0o0

The numbers match the source article’s benchmark table exactly (original benchmark table). The sparse activation explanation is grounded in the article text, and it even picks up on the Apple Silicon memory bandwidth bottleneck. It properly attaches a source ID citation at the end.

In other words, qwen3.6:35b read the benchmark article about itself and correctly described its own performance. The model can’t have known about itself at training time, so it’s reaching for the current article via RAG.

Zero API keys, zero network packets escaping localhost:11434 on Ollama. Nothing leaves for any provider or the author’s server.

PDFs work too

Beyond URLs, you can also upload files directly through the Upload File tab. I uploaded a local PDF (my own doujinshi data) as a test, and it pulled the body text just fine.

Open the Content tab and you can see the extracted text from the PDF, treated the same as URL ingestion. Embedding and insight generation flow through the same path afterward.

The PDF parser ships inside content-core. No extra setup needed.

So that Next.js proxy doesn’t reject large uploads, the frontend has proxyClientMaxBodySize: "100mb" set. Bump this if you want to feed it huge PDFs or video files.

Setup takeaways so far

For the chassis side of things, these are the points worth holding onto.

| Item | Observation |

|---|---|

| The real Docker dependency | SurrealDB alone. Install v2 natively and the whole Docker layer comes off. open-notebook itself is just a plain Python + Node + JavaScript web app |

| tmux 4-pane operation | Pure habit. Script it and startup beats Docker Compose (no image pulls) |

| A dedicated embedding model | Required. You can technically call embed APIs on a VLM or text LLM, but the results aren’t usable for RAG distance calculations. 1.2GB of bge-m3 is a rounding error |

| First-time insight generation | Slow. Loading qwen3.6:35b eats tens of seconds. Subsequent calls are fast thanks to keep-alive residency |

| Podcast generation | Give up. Ollama has no TTS. If full-local is the priority, you drop this feature |

That covers the open-notebook application side. From here on it’s about the models you load into it.

qwen3.6:35b hangs on chat responses

After feeding it the PDF, I casually asked “give me a quick summary of this PDF” and got no response. I tried twice more, and both times the spinner just kept spinning.

To isolate the problem, I hit Ollama’s API directly, bypassing open-notebook.

curl -sS -m 180 http://localhost:11434/api/chat -d '{

"model":"qwen3.6:35b",

"messages":[{"role":"user","content":"What is 1+1?"}],

"stream":false,"options":{"num_predict":500}

}'

# curl: (28) Operation timed out after 180003 milliseconds with 0 bytes receivedThree minutes in and zero bytes. Same silence when going through Ollama directly.

I timestamped the runner process’s CPU/RAM.

| Elapsed | Runner CPU | Runner RAM | State |

|---|---|---|---|

| t=5s | 41.6% | 45.8% | Loading model into VRAM |

| t=15s | 0% | 45.8% | Loaded; then silent |

| t=45s | 0% | 45.8% | Still silent |

| t=105s | 0% | 45.9% | Still silent, heading toward timeout |

CPU is 0% but the fan on the Mac is screaming. Apple Silicon GPU usage doesn’t show up in ps, but the symptom points to the Metal-side generation kernel spinning without ever signaling completion. On the API side, the Python process was stuck on __ulock_wait (waiting on a mutex).

As a control, I hit the same Ollama with gemma3:4b:

response: '1 + 1 = 2\n'

eval: 9 tokens, 0.1 s

load: 2.6 s

total: 3.0 s3 seconds. Ollama itself is alive, so the hang is specific to qwen3.6:35b.

Trigger conditions

I couldn’t pin down a deterministic repro, but the pattern feels like this.

- Triggers right after PDF insight generation (an internal ~160s long generation)

- Once stuck, even a full Ollama.app restart doesn’t recover (only qwen3.6:35b — gemma3:4b still responds instantly)

- Unloading the model with

keep_alive:0and reloading doesn’t help either

The same qwen3.6:35b was running at 27 tok/s on this morning’s Ollama benchmark article, so short one-shot prompts are fine. Whether a long insight-scale generation breaks some Metal/Ollama state, or there’s some other trigger entirely, isn’t clear.

Workaround

The simple workaround is swapping the chat model. open-notebook lets you assign chat, transformation, tools, and embedding to different models, so:

- Chat Model:

gemma3:12b(immediate response, stable on Ollama) - Transformation Model (insight generation): keep qwen3.6:35b — this runs in the background so slow is tolerable

- Embedding Model:

bge-m3unchanged

Split roles like that and the perceived latency changes completely. You can do it just by swapping Chat Model alone in open-notebook’s Settings → Models → Default Model Assignments.

Retest after the swap

I pointed /api/models/defaults at gemma3:12b for the chat model (leaving transformation on qwen3.6:35b) and re-sent the same “give me a quick summary of this PDF” against the same PDF (ChikaraMeshi_0802_New.pdf, my own doujinshi data).

The response:

This PDF [source:n6xfz2tkhjddcrrxdggg] is a cultural/sociological consideration of the Tokyo beef bowl chain “Rikihan (Chikara-meshi)”.

Here’s the summary.

- What Rikihan is: A short-lived chain that serves grilled beef bowls — a phenomenon symbolizing modern meat food culture and the evolution of donburi cuisine.[insight:1dtfth4oe3nhafa6m1xn]

- Lifecycle: Rapid expansion, quality divergence, corporate acquisition, and business-model pivot cycle.[insight:1dtfth4oe3nhafa6m1xn]

- Menu: Two grilling styles — “barabok” on iron grating and “komameku” on an iron plate — each with its own sauce.[insight:1dtfth4oe3nhafa6m1xn]

- Evaluation criteria: Uses its own metrics to score the texture of the meat, sauce penetration, rice quality, service speed, location, and so on.[insight:1dtfth4oe3nhafa6m1xn]

- Cultural meaning: Origin of internet memes, food-crawl tours, and a record of a near-extinct cultural heritage.[insight:1dtfth4oe3nhafa6m1xn]

- As of when: Data as of July 31, 2015.[insight:1dtfth4oe3nhafa6m1xn]

This document is an attempt to record Rikihan not merely as a restaurant but as a transient cultural phenomenon.[insight:1dtfth4oe3nhafa6m1xn]

Against the 3-minute silence from qwen3.6:35b, gemma3:12b came back in seconds. But the response doesn’t quite line up with what the PDF actually says, so I went back to the source text and diffed.

Checking gemma3:12b’s answer against the source

The PDF was yakigyu August 2015 issue, which I published at C88 in 2015 — a food-themed doujinshi I made chasing Tokyo Chikara-meshi. It’s still available on Booth and Melonbooks, if you feel like tolerating an abrupt plug. Back to the topic.

| gemma3’s claim | What the PDF actually says | Verdict |

|---|---|---|

| ”Two grilling styles" | "There are two kinds of grilled beef bowls? The traditional type and the new type (new grilled beef bowl)” | ✅ matches |

| ”Data as of July 31, 2015" | "As of July 31, 2015” | ✅ matches |

| ”Corporate acquisition” / “business-model pivot" | "MK acquired Kobe Lampu-tei on March 25, 2015” / “all Chikara-meshi stores underwent a business-model pivot” | ⚠️ the words are there, but the subject is shifted — the model reads them as Chikara-meshi’s own lifecycle |

| ”Rapid expansion, quality divergence” | Store history timeline and per-store quality variations exist | ✅ grounded |

| ”Iron grating ‘barabok’” / “iron plate ‘komameku’" | "Bara-niku (belly meat) on ami-yaki (wire-net grill)” / “Koma-niku (chunks) on an iron plate (frying pan)” | 🚨 word-coinage. bara-niku → barabok, koma-niku → komameku, ami-yaki → iron grating |

| ”Rikihan (Chikara-meshi)“ | The body text only uses “Chikara-meshi” / “Tokyo Chikara-meshi” | 🚨 looks like a back-translation from the filename ChikaraMeshi |

| ”Cultural-anthropology consideration” / “origin of internet memes” / “near-extinct cultural heritage” | No such wording in the document | 🚨 whole framing fabricated |

Facts are there. Specific numbers and dates match. But it coins its own proper nouns (“bara-niku” → “barabok”, “ami-yaki” → “iron grating”) and tacks on grandiose framings that aren’t in the source. Calling this hallucination undersells it; coinage-symptom is closer. Any time the original word would do, it adds a twist.

With that behavior, search and quotation use are off the table. If you want to grep the PDF’s contents via the model, but all the proper nouns have been coined into new shapes, you can’t search the original document for whatever the answer used.

Comparing other models side by side

Leaving the investigation at gemma3:12b felt half-finished, so I lined up every Japanese-capable chat candidate in my Ollama and pointed each one at the same PDF. Same question every time — “give me a quick summary of this PDF” — each from a fresh chat session.

The Chat Model in open-notebook can be swapped via /api/models/defaults from the API. Useful when the UI selector gets truncated by a long model name.

curl -sS -X PUT http://localhost:5055/api/models/defaults \

-H "Content-Type: application/json" \

-d '{"default_chat_model":"model:<id>"}'Existing sessions stick to their own chat_session:xxxx-level model_override, so I rewrote those directly too.

curl -sS -X PUT http://localhost:5055/api/chat/sessions/<sid> \

-H "Content-Type: application/json" \

-d '{"model_override":"model:<id>"}'Results

| Model | Size | Response | Info density | Shared coinages (barabok / iron grating) | New coinages | Fine-grained extraction |

|---|---|---|---|---|---|---|

| qwen3.6:35b | 23GB MoE | 🚨 hang | — | — | — | — |

| gemma3:12b | 8.1GB | ✅ | medium | both 🚨 | — | Catches acquisition/pivot but with subject shifted |

| gemma3:4b | 3.3GB | ✅ | low | barabok ✅ avoided, iron grating 🚨 | — | Picks up the soy/miso sauce distinction |

| gemma4:e4b | 9.6GB | ✅ | low | same as above | — | Byte-for-byte identical output to gemma3:4b |

| Qwen3-Swallow-30B-A3B (Japanese LLM roundup) | 18GB MoE | ✅ | high | both 🚨 | 🚨 “yaki-niku-don” | Extracts the 30/60 scoring from the shops, infers Kanto/Kansai/Chugoku regional distribution |

| qwen3.5-abliterated (abliterated walkthrough) | 17GB | ✅ | highest | both 🚨 | 🚨 “Makko-sha” | Picks up the rice blend and the inconsistent serving-bowl sizing |

| qwen3.5:35b | 23GB MoE | ✅ | highest | both 🚨 | 🚨 “yaki-gyuu-niku-no-donburi” | Nearly identical content to abliterated, in numbered list form |

| LLM-jp-4-32B-A3B-thinking (ROCm benchmark, mmnga-o GGUF) | 21GB MoE | 🚨 garbled output | — | — | — | — |

| LLM-jp-4-32B-A3B-thinking (alfredplpl GGUF) | 18GB MoE (MXFP4) | 🚨 garbled output | — | — | — | — |

Observations

- The source of the shared coinages is probably the insight side. “barabok”, “komameku”, and “iron grating” show up in gemma, Swallow, and the qwen3.5 family alike. The most consistent hypothesis is that once those expressions entered the insight (the summary generated by qwen3.6:35b at PDF ingest), every downstream chat model sees them in its input and propagates them regardless of which model is asked

- Each model also invents its own new coinages. Swallow gives you “yaki-niku-don”, qwen3.5-abliterated gives you “Makko-sha” (a mangling of the real MK Corporation), and qwen3.5:35b gives you “yaki-gyuu-niku-no-donburi”. Insight isn’t the only contamination source — the chat model adds more on top

- The Qwen3.5 family (Swallow, abliterated, plain 3.5) pulls more information. Things like the 30/60 total scores per shop, the rice blend description, the inconsistent serving-bowl sizing, and the “first FC store” trivia — details not present in the insight — all surface. BGE-m3’s vector search is hitting source chunks directly

- Gemma plays safe and extracts less. The tiny 4B/e4B models actually skip the “barabok” coinage, suggesting information depth and coinage risk are a trade-off

- gemma3:4b and gemma4:e4b returned byte-for-byte identical outputs. Given the same 433-token insight at the same temperature, they converge to the same output across a generational jump (implying Gemma-family Japanese tokenization behavior is very similar across versions)

- LLM-jp-4 is effectively uncallable via Ollama right now. Both the mmnga-o and alfredplpl conversions, when hit through

/api/chat, return garbled token streams with interleaved control characters like\x01and\x08. Send a raw prompt through/api/generateand it produces clean Japanese, so the model weights themselves are fine. The chat template (or special token definition) embedded in the GGUF is out of sync with Ollama’s llama.cpp. Generation speed is around 67 tok/s, which makes it all the more frustrating

What to pick

Balancing speed, accuracy, and stability, this is the shape of it.

| Use case | Pick |

|---|---|

| Just want a response, content can be loose | gemma3:12b |

| Want Japanese fine details, a rough summary won’t do | Qwen3-Swallow-30B-A3B or qwen3.5:35b |

| Want the latest Qwen | Find a configuration where qwen3.6:35b doesn’t hang (wait for the next Ollama update?) |

| Want a fully domestic option | Wait for Ollama compatibility on LLM-jp-4 (or hit it through MLX directly) |

The coinage issue survived every model. Switching to pure vector-search mode without insight, or changing the model that generates the insight, might help, but that’s homework for next time. The conclusion this article lands on is: running it fully locally is realistic, but model choice is the watershed for practical use.

This isn’t the first time model swap mechanics have shown up on the site, either:

- Swapping Qwen 2.5 for Qwen 3.5 on NDLOCR to compare OCR accuracy

- Digging into why Qwen 3.5 failed completely on Radeon 8060S — all the way down to the AMD driver

- Ollama’s move to an MLX backend and the huge boost for Apple Silicon inference

- Running a local VLM to extract RPG-style stats from a character image

Reading these alongside this article gives a fuller picture of “which model is good at what” and “why do they want to re-coin proper nouns”. open-notebook itself works roughly without complaint; the bottleneck has shifted to the model you load into it — that’s the takeaway after everything. So yes, the M1 Max on my desk can now answer questions about its own articles, notes, and PDFs without letting any data escape.