AnimeGamer: AI That Understands Game State to Generate Anime Videos

AnimeGamer, jointly developed by Tencent ARC Lab and City University of Hong Kong, is a model specialized for anime-style game simulation video generation. The research was accepted to ICCV 2025 and takes a fundamentally different approach from general-purpose video generators like Sora and Runway.

What makes it different

Typical video generation models are general-purpose tools that produce video from a text prompt. AnimeGamer, by contrast, tracks game state as it generates video.

| Aspect | AnimeGamer | Sora / Runway and similar models |
|---|---|---|
| Focus area | Life simulation for anime-style games | General-purpose video generation |
| State tracking | Integrates game-state prediction | None |
| Context handling | Explicitly incorporates previous frames | Weaker continuity between frames |

Demo videos featuring Studio Ghibli characters

The published demos use characters from the following Studio Ghibli works:

- Ponyo: a clip of Sosuke exploring a game world

- Kiki’s Delivery Service: a clip of Kiki flying through the sky

- Castle in the Sky: a clip featuring Pazu

One interesting detail is that it can generate videos where characters from different anime works appear in the same world. For example, it can produce a clip where Pazu flies on Kiki’s broom.

That said, this is not an interactive real-time game. You provide instructions in text, and the system generates matching video clips.

Character state management

Like a game system, AnimeGamer gives characters multiple status values:

- Stamina: consumed by actions and recovered through rest

- Social value: changes through interactions with other characters

- Entertainment value: rises through enjoyable activities

These values affect how the simulation progresses and help maintain consistency.
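
To make the idea of status values concrete, here is a minimal sketch of how such a state could be represented. It is not taken from the AnimeGamer code: the class name `CharacterState`, the 0 to 100 range, the starting values, and the deltas are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


def _clamp(value: int, low: int = 0, high: int = 100) -> int:
    """Keep a status value inside an assumed 0-100 range."""
    return max(low, min(high, value))


@dataclass(frozen=True)
class CharacterState:
    # Hypothetical defaults; the real model's value ranges may differ.
    stamina: int = 50
    social: int = 50
    entertainment: int = 50

    def apply(self, d_stamina: int = 0, d_social: int = 0,
              d_entertainment: int = 0) -> "CharacterState":
        """Return a new state with the given changes applied and clamped."""
        return CharacterState(
            _clamp(self.stamina + d_stamina),
            _clamp(self.social + d_social),
            _clamp(self.entertainment + d_entertainment),
        )


state = CharacterState()
# An action costs stamina and raises entertainment (illustrative numbers).
after_flight = state.apply(d_stamina=-20, d_entertainment=15)
# Resting restores stamina, capped at the assumed maximum.
after_rest = after_flight.apply(d_stamina=80)
print(after_flight)
print(after_rest)
```

Returning a new object on every change keeps each turn's state available for later comparison, which is convenient when checking consistency across turns.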

Technical features

Next Game State Prediction

The model generates both character motion and state transitions at the same time. In an RPG-style setting, for example, it can track position, HP, and action state while generating the corresponding animation.
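
The key point is that one turn yields two outputs together: a description of the motion to animate and the updated state. The toy sketch below illustrates only that interface. The rule-based `predict_turn` function is a hypothetical stand-in for the learned model, and `GameState`, `TurnOutput`, and the instruction strings are names invented for this example.

```python
from typing import NamedTuple, Tuple


class GameState(NamedTuple):
    position: Tuple[int, int]
    hp: int
    action: str


class TurnOutput(NamedTuple):
    motion_prompt: str      # what the generated animation should show
    next_state: GameState   # the state after this turn


def predict_turn(state: GameState, instruction: str) -> TurnOutput:
    """Toy stand-in for the model: produce motion and next state in one step."""
    x, y = state.position
    if instruction == "walk east":
        nxt = GameState((x + 1, y), state.hp, "walking")
    elif instruction == "rest":
        nxt = GameState((x, y), min(100, state.hp + 10), "resting")
    else:
        # Unknown instructions leave position and HP unchanged.
        nxt = state._replace(action="idle")
    return TurnOutput(f"character {nxt.action} at {nxt.position}", nxt)


start = GameState(position=(0, 0), hp=90, action="idle")
first = predict_turn(start, "walk east")
second = predict_turn(first.next_state, "rest")
print(first.motion_prompt)
print(second.next_state)
```

Feeding `first.next_state` into the second call mirrors how each turn builds on the state produced by the previous one.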

Maintaining contextual consistency

Past frame information is explicitly incorporated to preserve scene-level continuity. This helps reduce a common failure mode in video generation, where a character’s appearance changes midway through the clip. Elements like a purple car or a forest background stay consistent across multiple turns.
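
One simple way to picture this is a bounded history of earlier turns that is prepended to each new instruction. The sketch below is an assumption-laden illustration, not AnimeGamer's mechanism: `TurnHistory` is a hypothetical class, and short text summaries stand in for the visual features the real model would take from previous frames.

```python
from collections import deque


class TurnHistory:
    """Keep the most recent turns and build a conditioning context from them."""

    def __init__(self, max_turns: int = 3) -> None:
        # A deque with maxlen drops the oldest turn automatically.
        self._turns = deque(maxlen=max_turns)

    def add(self, instruction: str, frame_summary: str) -> None:
        self._turns.append((instruction, frame_summary))

    def build_context(self, new_instruction: str) -> str:
        lines = [
            f"[turn {i}] {instruction} -> {summary}"
            for i, (instruction, summary) in enumerate(self._turns, start=1)
        ]
        lines.append(f"[next] {new_instruction}")
        return "\n".join(lines)


history = TurnHistory(max_turns=2)
history.add("drive the purple car", "purple car on a road")
history.add("enter the forest", "purple car among trees")
history.add("park", "purple car parked in the forest")
context = history.build_context("get out of the car")
print(context)
```

Because the window holds only two turns here, the first turn has already been dropped, while the purple car and the forest still appear in the context for the next generation step.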

Using a multimodal LLM

The system combines text prompts with visual context from previous frames to recognize and generate actions. This makes it more than plain text-to-video: generation is conditioned on game-like context as well as the prompt.

Trying it yourself

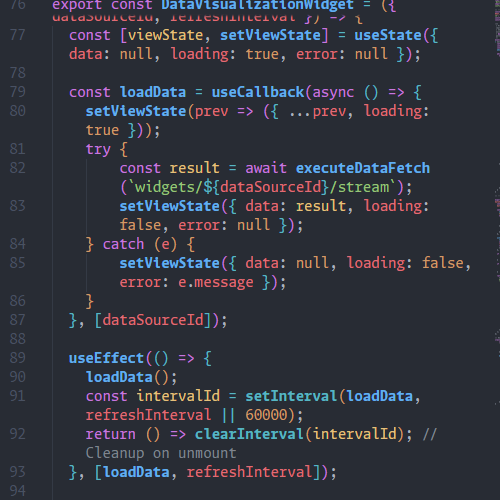

The code is available on GitHub, and you can run a local Gradio demo, though the requirements are fairly heavy. Once the environment is set up, start the demo with:

python app.py